How to reduce the inflated carbon footprint of AI

As machine learning experiments become more sophisticated, their carbon footprint is exploding. Now, researchers have calculated the carbon cost of training a range of models in cloud computing data centers at various locations.1. Their findings could help researchers reduce emissions created by work that relies on artificial intelligence (AI).

The team found marked differences in emissions between geographic locations. For the same AI experiment, “the most efficient regions produced about a third of the least efficient emissions,” says Jesse Dodge, a machine learning researcher at the Allen Institute for AI in Seattle, Washington, who co-led the study. .

Until now, there haven’t been good tools to measure emissions produced by cloud-based AI, says Priya Donti, a machine learning researcher at Carnegie Mellon University in Pittsburgh, Pennsylvania, and co-founder of the Climate Change AI group.

“This is great work by great authors and contributes to an important dialogue about how machine learning workloads can be managed to reduce their emissions,” she says.

Location matters

Dodge and his collaborators, which included Microsoft researchers, monitored electricity usage while training 11 common AI models, ranging from the types of language models that underpin Google Translate to vision algorithms that automatically label pictures. They combined this data with estimates of changing emissions from the power grids powering 16 Microsoft Azure cloud servers over time, to calculate training power consumption across a range of locations.

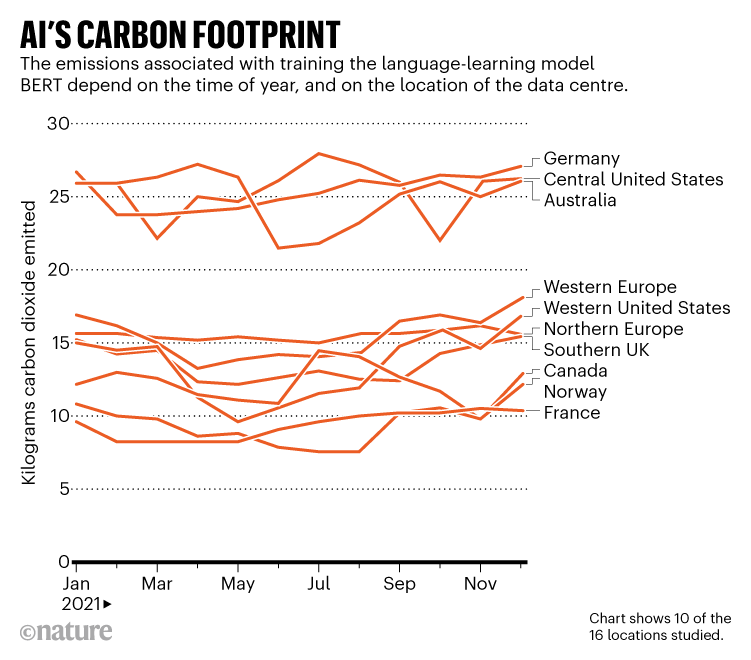

Facilities in different locations have different carbon footprints due to global variation in energy sources, as well as fluctuations in demand. The team found that training BERT, a common machine learning language model, in data centers in the middle of the United States or Germany emitted 22 to 28 kilograms of carbon dioxide, depending on the time period. of the year. This was more than double the emissions generated by the same experiment in Norway, which derives most of its electricity from hydroelectric power, or France, which relies primarily on nuclear power (see “The Carbon Footprint of AI”).

The time of day the experiments take place is also important. For example, training AI in Washington at night, when the state’s electricity comes solely from hydroelectric power, led to lower emissions than during the day, when electricity also comes from power plants in the gas, says Dodge, who presented the results. at the Association for Computing Machinery conference on fairness, accountability and transparency in Seoul last month.

The AI models also varied wildly in their shows. The DenseNet Image Classifier created the same CO2 shows like charging a cellphone, while training a medium-sized version of a language model known as a Transformer (which is much smaller than the popular GPT-3 language model, made by the company OpenAI research facility in San Francisco, California) produces around the same emissions as a typical US household generates in a year. Additionally, the team completed only 13% of the transformer training process; full training would produce emissions “on the order of magnitude of burning an entire railroad car full of coal,” says Dodge.

Emissions figures are also underestimated, he adds, because they do not include factors such as the energy used for data center overhead or the emissions needed to create the necessary hardware. Ideally, the numbers would also have included error bars to account for large underlying uncertainties in a network’s emissions at any given time, Donti says.

Greener choices

Where other factors are equal, Dodge hopes the study can help scientists decide which data center to use for experiments aimed at minimizing emissions. “This decision, it turns out, is one of the most impactful things anyone can do” in the discipline, he says. Thanks to this work, Microsoft now makes information on the power consumption of its hardware available to researchers who use its Azure service.

Chris Preist of the University of Bristol, UK, who studies the impacts of digital technology on environmental sustainability, says the responsibility for reducing emissions should lie with the cloud provider rather than the researcher. Providers could ensure that at all times, the lowest carbon data centers are used the most, he says. They could also adopt flexible strategies that allow machine learning cycles to start and stop at times that reduce emissions, Donti adds.

Dodge says the tech companies that conduct the biggest experiments should take the greatest responsibility for transparency around emissions and trying to minimize or offset them. Machine learning isn’t always bad for the environment, he points out. This can help design efficient materials, model the climate, and track deforestation and endangered species. Nevertheless, the growing carbon footprint of AI is becoming a major source of concern for some scientists. Even though some research groups are working on tracking carbon emissions, transparency “has not yet reached a community standard,” Dodge says.

“This work has been focused on just trying to get transparency on this topic, because it’s sorely lacking right now,” he says.

Comments are closed.